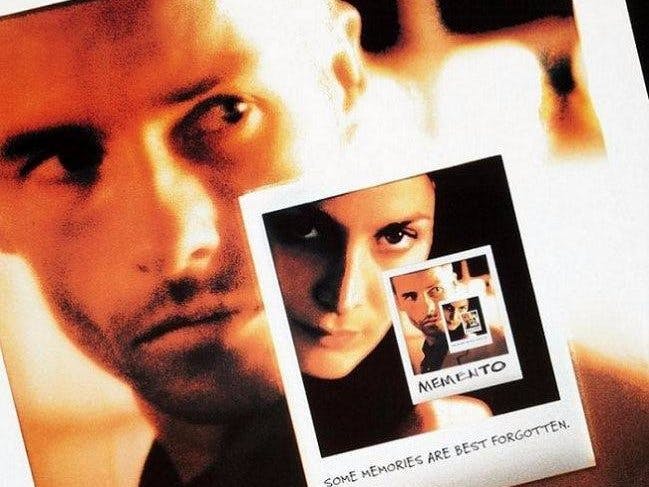

Memento (2000) - A man, suffering from short-term memory loss, uses notes and tattoos to hunt for the man he thinks killed his wife.

Ghajini (2005 in Tamil/Telugu, 2008 in Hindi) - Hit by an iron rod, a tycoon develops a condition that prevents him from remembering anything beyond fifteen minutes. With notes tattooed on his body, he sets out to find his fiancée's killer.

A person suffering from such a disorder has to carry photos or notes about the people he can trust. This becomes highly inefficient when you have a whole bundle to sort through and need to figure things out before it's too late.

But those were the days of 2G data connections.

A decade later, in the present day, we have Microsoft Azure Cognitive Services! Instead of carrying a bunch of images with notes, carry a phone app: click a picture, and it tells you who is in front of you, along with any notes you have added, such as "My Manager"!

I have created a Universal Windows Platform (UWP) app that runs on my phone and does exactly that. Take a picture with the phone camera, or upload one from the gallery (just in case you received a tip on WhatsApp). The application uses Azure Cognitive Services to find information about the people in the picture.

Awful UX. I am sure someone with the right skills can improve on this.

Setting Up Accounts

You need a Cognitive Services subscription key for the Face API (Preview) and the account keys for the Azure Storage account that hosts the Azure Function that creates thumbnails. The Azure Function that generates thumbnails is detailed in a separate article [1].

https://www.microsoft.com/cognitive-services/en-US/subscriptions
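The `HttpHandler.client` used by the app is not shown in the article. A minimal sketch of how such a client could be prepared, assuming the subscription key is read from the Settings page and passed in as a string: the Face API expects the key in the `Ocp-Apim-Subscription-Key` request header, so it can be added once as a default header. `FaceClientFactory` is a hypothetical name.

```csharp
using System;
using System.Net.Http;

// Hypothetical helper: an HttpClient that authenticates every Face API request.
public static class FaceClientFactory
{
    public static HttpClient Create(string subscriptionKey)
    {
        if (string.IsNullOrWhiteSpace(subscriptionKey))
            throw new ArgumentException("A Face API subscription key is required.", nameof(subscriptionKey));

        var client = new HttpClient();
        // Cognitive Services reads the subscription key from this header on each call.
        client.DefaultRequestHeaders.Add("Ocp-Apim-Subscription-Key", subscriptionKey);
        return client;
    }
}
```

Creating the client once and reusing it for every call (as a static field like `HttpHandler.client` would) avoids opening a new connection per request.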

The application has a landing page that lets you take a picture with the device camera or pick one from the device gallery. It also has links to the Settings page and for switching between the gallery and the camera.

The code below takes an image from the camera or gallery and uploads it to blob storage. The blob's URL is passed to the Cognitive Services Face API, which detects the face regions. Then, for each detected face, we make another Cognitive Services call to find a match in our trained groups.

```csharp
// Namespaces used: System, System.Collections.Generic, System.IO, System.Net.Http, System.Text,
// Windows.Storage, Windows.Storage.Streams, Windows.UI.Xaml.Media.Imaging,
// Microsoft.WindowsAzure.Storage.Blob
private async void FaceQuery(StorageFile file)
{
    if (null == file)
        return;

    CloudBlockBlob blob = null;
    txtResponse.Text = "";
    progressRingMainPage.IsActive = true;

    try
    {
        // Show the captured or selected picture on the landing page.
        BitmapImage bitmapImage = new BitmapImage();
        using (IRandomAccessStream fileStream = await file.OpenAsync(FileAccessMode.Read))
        {
            await bitmapImage.SetSourceAsync(fileStream);
        }
        CapturedPhoto.Source = bitmapImage;
        CapturedPhoto.Tag = file.Path;

        // Upload to a public-read blob under a unique name so the Face API can fetch it by URL.
        string blobFileName = Guid.NewGuid() + Path.GetExtension(file.Name);
        await HttpHandler.tempContainer.CreateIfNotExistsAsync();
        BlobContainerPermissions permissions = new BlobContainerPermissions();
        permissions.PublicAccess = BlobContainerPublicAccessType.Blob;
        await HttpHandler.tempContainer.SetPermissionsAsync(permissions);
        blob = HttpHandler.tempContainer.GetBlockBlobReference(blobFileName);
        await blob.UploadFromFileAsync(file);

        // Detect faces; returnFaceId=true returns a faceId per face for the identify step.
        string uri = "https://api.projectoxford.ai/face/v1.0/detect?returnFaceId=true";
        string jsonString = "{\"url\":\"" + HttpHandler.storagePath + "visitors/" + blobFileName + "\"}";
        HttpContent content = new StringContent(jsonString, Encoding.UTF8, "application/json");
        HttpResponseMessage response = await HttpHandler.client.PostAsync(uri, content);
        string responseBody = await response.Content.ReadAsStringAsync();

        if (response.IsSuccessStatusCode)
        {
            // Load the person groups once, then match each detected face against them.
            if (null == globals.gPersonGroupList)
                globals.gPersonGroupList = await PersonGroupCmds.ListPersonGroups();

            List<string> names = await VisitorCmds.CheckVisitorFace(responseBody, globals.gPersonGroupList);
            txtResponse.Text = string.Join(", ", names);
        }
        else
        {
            globals.ShowJsonErrorPopup(responseBody);
        }
    }
    finally
    {
        // Always remove the temporary blob and stop the progress ring, even if a call throws.
        if (null != blob)
            await blob.DeleteIfExistsAsync();
        progressRingMainPage.IsActive = false;
    }
}
```
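`VisitorCmds.CheckVisitorFace` is not listed in the article, so its exact logic is unknown. The sketch below shows one way the matching step could work, assuming System.Text.Json is available (on older UWP projects, Json.NET would play the same role): pull the `faceId` values out of the detect response, send them to the Face API `identify` endpoint for a person group, and keep the highest-confidence candidate per face. `IdentifySketch` and its methods are hypothetical; `personGroupId`, `faceIds` and `maxNumOfCandidatesReturned` are identify request fields. Because identify accepts at most 10 faceIds per call, this sketch batches faces instead of making one call per face.

```csharp
using System.Collections.Generic;
using System.Linq;
using System.Text.Json;

// Hypothetical sketch of the identify step that follows face detection.
public static class IdentifySketch
{
    // Same (legacy) host as the detect call in FaceQuery.
    public const string IdentifyUri = "https://api.projectoxford.ai/face/v1.0/identify";

    // The Face API accepts between 1 and 10 faceIds per identify call.
    private const int MaxFacesPerCall = 10;

    // The detect response is a JSON array; each element carries a "faceId".
    public static List<string> ExtractFaceIds(string detectResponseJson)
    {
        using JsonDocument doc = JsonDocument.Parse(detectResponseJson);
        return doc.RootElement.EnumerateArray()
                  .Select(face => face.GetProperty("faceId").GetString())
                  .ToList();
    }

    // Builds one identify request body per batch of up to 10 faceIds for a single person group.
    public static List<string> BuildIdentifyBodies(string personGroupId, IReadOnlyList<string> faceIds)
    {
        var bodies = new List<string>();
        for (int i = 0; i < faceIds.Count; i += MaxFacesPerCall)
        {
            var body = new
            {
                personGroupId,
                faceIds = faceIds.Skip(i).Take(MaxFacesPerCall).ToArray(),
                maxNumOfCandidatesReturned = 1
            };
            bodies.Add(JsonSerializer.Serialize(body));
        }
        return bodies;
    }

    // The identify response is a JSON array of { faceId, candidates: [{ personId, confidence }] }.
    // Returns faceId -> personId of the best candidate; faces without candidates are skipped.
    public static Dictionary<string, string> BestCandidates(string identifyResponseJson)
    {
        var result = new Dictionary<string, string>();
        using JsonDocument doc = JsonDocument.Parse(identifyResponseJson);
        foreach (JsonElement face in doc.RootElement.EnumerateArray())
        {
            JsonElement best = face.GetProperty("candidates").EnumerateArray()
                .OrderByDescending(c => c.GetProperty("confidence").GetDouble())
                .FirstOrDefault();
            if (best.ValueKind == JsonValueKind.Object)
                result[face.GetProperty("faceId").GetString()] = best.GetProperty("personId").GetString();
        }
        return result;
    }
}
```

Each body would be POSTed to `IdentifyUri` once per person group in `globals.gPersonGroupList`; the returned personIds can then be mapped to names (and notes such as "My Manager") from that group's person list.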

For this setup to work, we need to train the system with photos of people. As explained in a previous article [4], we organize people into groups and associate pictures with these people. We then train each of these person groups so that the search works. There is no need to keep the images of people once training is done. In my case, I have saved the thumbnails so that I can check or delete images and update the training during this experiment.
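Article [4] covers the training calls in detail. As a quick reference, the sketch below lists the Face API v1.0 REST calls behind this workflow, in order: create the group, create a person, add a face to that person, start training, and poll the training status until it reports `succeeded` or `failed`. `TrainingRequests` is a hypothetical helper that only builds the HTTP method and URL for each call; sending them is left to an `HttpClient` carrying the subscription key header. The group id check mirrors the API's rule that a personGroupId uses lower-case letters, digits, '-' or '_' and is at most 64 characters long.

```csharp
using System;
using System.Net.Http;
using System.Text.RegularExpressions;

// Hypothetical helper: method and URL for each Face API v1.0 call in the training workflow.
public static class TrainingRequests
{
    public const string BaseUri = "https://api.projectoxford.ai/face/v1.0";

    // A personGroupId may contain lower-case letters, digits, '-' and '_', up to 64 characters.
    private static readonly Regex GroupIdPattern = new Regex(@"\A[a-z0-9_-]{1,64}\z");

    public static bool IsValidGroupId(string personGroupId) =>
        personGroupId != null && GroupIdPattern.IsMatch(personGroupId);

    // 1. Create the group; JSON body such as {"name": "..."}.
    public static (HttpMethod Method, string Url) CreateGroup(string groupId) =>
        (HttpMethod.Put, GroupUrl(groupId));

    // 2. Create a person; JSON body such as {"name": "...", "userData": "My Manager"}.
    //    The response holds the new personId.
    public static (HttpMethod Method, string Url) CreatePerson(string groupId) =>
        (HttpMethod.Post, GroupUrl(groupId) + "/persons");

    // 3. Add a face to that person; JSON body {"url": "<public image URL>"}.
    public static (HttpMethod Method, string Url) AddFace(string groupId, string personId) =>
        (HttpMethod.Post, GroupUrl(groupId) + "/persons/" + Uri.EscapeDataString(personId) + "/persistedFaces");

    // 4. Start training the whole group (no body).
    public static (HttpMethod Method, string Url) Train(string groupId) =>
        (HttpMethod.Post, GroupUrl(groupId) + "/train");

    // 5. Poll until the returned "status" is "succeeded" or "failed".
    public static (HttpMethod Method, string Url) TrainingStatus(string groupId) =>
        (HttpMethod.Get, GroupUrl(groupId) + "/training");

    private static string GroupUrl(string groupId) =>
        IsValidGroupId(groupId)
            ? BaseUri + "/persongroups/" + groupId
            : throw new ArgumentException("Invalid personGroupId: " + groupId, nameof(groupId));
}
```

Training applies to the group as a whole, so after adding or deleting faces the group has to be retrained before identify sees the change.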

Application Pages:

- Person Group Page

- Persons Page

- Person Face Page

These pages contain the code to manage Person Groups, Persons and each person's associated Faces. This is also documented in [4]. As part of the application setup, you need to create a Person Group, add a Person to it, and add a Face to that Person. When adding a person's face, make sure you use a picture that contains only one face. For a picture with multiple faces, we need to provide the rectangle that contains the face of interest, but I have not added that to the app.
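The missing piece for multi-face pictures is the `targetFace` query parameter of the add-face call, given as `left,top,width,height`. A sketch under the assumption that the face of interest is simply the largest one the detect call finds (a real app would more likely let the user tap the face); `TargetFaceSketch` and its methods are hypothetical, and System.Text.Json is assumed to be available.

```csharp
using System;
using System.Linq;
using System.Text.Json;

// Hypothetical sketch: choose one face in a multi-face picture and pass it as targetFace.
public static class TargetFaceSketch
{
    // Reads the faceRectangle of every face in a detect response and returns the
    // largest one formatted as "left,top,width,height", the format targetFace expects.
    public static string LargestFaceTarget(string detectResponseJson)
    {
        using JsonDocument doc = JsonDocument.Parse(detectResponseJson);
        var rects = doc.RootElement.EnumerateArray()
            .Select(face => face.GetProperty("faceRectangle"))
            .Select(r => (Left: r.GetProperty("left").GetInt32(),
                          Top: r.GetProperty("top").GetInt32(),
                          Width: r.GetProperty("width").GetInt32(),
                          Height: r.GetProperty("height").GetInt32()))
            .ToList();

        if (rects.Count == 0)
            throw new InvalidOperationException("The picture contains no detectable face.");

        var largest = rects.OrderByDescending(r => r.Width * r.Height).First();
        return $"{largest.Left},{largest.Top},{largest.Width},{largest.Height}";
    }

    // Add-face URL with the chosen rectangle; the value holds only digits and commas, so it needs no escaping.
    public static string AddFaceUrl(string baseUri, string groupId, string personId, string targetFace) =>
        $"{baseUri}/persongroups/{groupId}/persons/{personId}/persistedFaces?targetFace={targetFace}";
}
```

The request body stays the same `{"url": "..."}` as for a single-face picture; only the query string changes.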

Results
