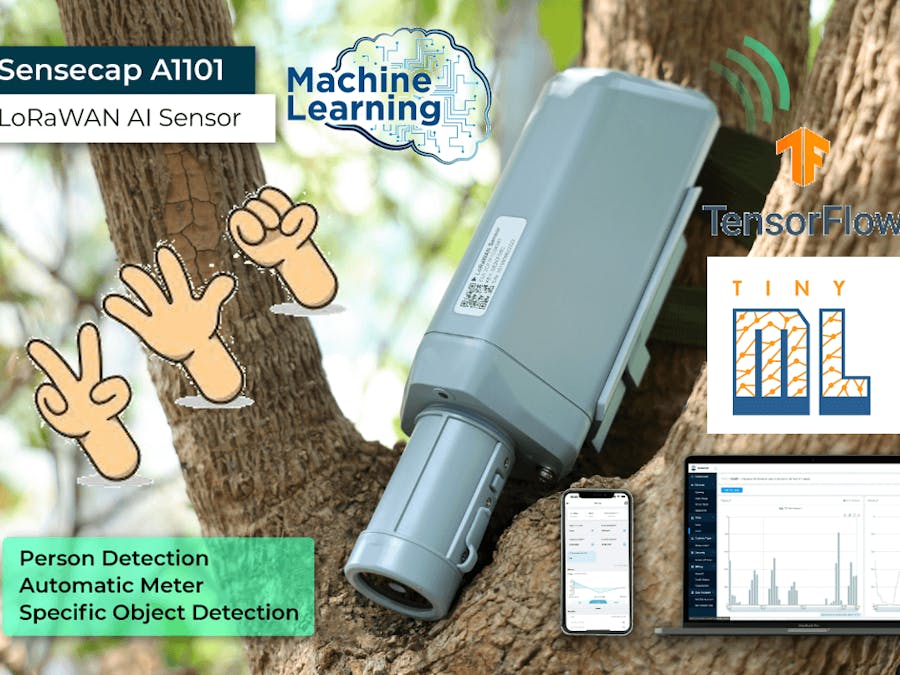

SenseCAP A1101 - LoRaWAN Vision AI Sensor is a TinyML Edge AI-enabled image sensor. It supports training models with TensorFlow Lite without writing a line of code, so developers and enthusiasts without advanced AI training experience, like myself, can learn machine learning and train models for interesting AI applications.

In this project, I will show how I got started with the SenseCAP A1101 Vision AI Sensor: collecting data, annotating images and generating a dataset with Roboflow, training my own gesture-detection model with Google Colab and TensorFlow Lite, deploying the model, and uploading data to the SenseCAP Cloud platform.

If you are looking for a simple way to get started with machine learning, I hope this project will be helpful for you.

- SenseCAP A1101

- PC

- SenseCAP Mate App

- Hotspot: SenseCAP M1 / SenseCAP M2 Data Only hotspot

Note:

To have the AI results uploaded to the SenseCAP Cloud, you will need a SenseCAP M1 or SenseCAP M2 Data Only hotspot to provide LoRaWAN network coverage in your region. A LoRaWAN hotspot can provide miles of LoRa signal coverage if there are no buildings or other obstacles blocking the signal.

Software Preparation

Now let's set up the software. The software setup for Windows, Linux and Intel Mac will be the same, whereas for M1/M2 Mac it will be different.

For Windows, Linux, Intel Mac

1. Make sure Python is already installed on the computer. If not, visit this page to download and install the latest version of Python

2. Install the following dependency

pip3 install libusb1

M1/M2 Mac

- Install Homebrew

/bin/bash -c "$(curl -fsSL https://raw.githubusercontent.com/Homebrew/install/HEAD/install.sh)"

- Install conda

brew install conda

- Download libusb

wget https://conda.anaconda.org/conda-forge/osx-arm64/libusb-1.0.26-h1c322ee_100.tar.bz2

- Install libusb

conda install libusb-1.0.26-h1c322ee_100.tar.bz2

Download the SenseCAP Mate App

Download and install it on your mobile phone according to your OS
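Before moving on, it can help to confirm that the Python dependency from the software setup is visible to the interpreter you will use for the capture script. The following is only a minimal sketch: it assumes the `libusb1` package is imported under the module name `usb1`, and the helper name `has_module` is made up for illustration.

```python
import importlib.util


def has_module(name: str) -> bool:
    """Return True if this Python interpreter can locate the named top-level module."""
    return importlib.util.find_spec(name) is not None


if __name__ == "__main__":
    # Assumption: the libusb1 package installs a module called "usb1".
    if has_module("usb1"):
        print("usb1 found: the USB dependency looks installed.")
    else:
        print("usb1 not found: try 'pip3 install libusb1' again.")
```

If the check fails even after installing, the package was probably installed for a different Python interpreter than the one running the check.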

Step One: Collect Image Data

Connect the A1101 to the PC via a Type-C cable. Double-click the button to enter mass storage mode. Drag and drop this .uf2 file onto the VISIONAI drive. As soon as the .uf2 file finishes copying, the drive will disappear. This means the firmware has been successfully uploaded to the module.

Copy and paste this Python script into a newly created file named capture_images_script.py on your PC. Execute the Python script to start capturing images:

python3 capture_images_script.py

By default, it will capture an image every 300 ms. If you want to change this, for example to capture an image every second, you can execute the script like this:

python3 capture_images_script.py --interval 1000

After the script is executed, the SenseCAP A1101 will continuously capture images from its built-in camera and save all of them inside a folder named save_img.
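As a rough planning aid, the capture interval tells you how many images a session can produce: at the default 300 ms, one minute yields at most 200 images. The sketch below works that out and counts what has landed in save_img so far. The helper names are hypothetical, and the list of image extensions is an assumption, since the format written by the capture script is not stated here.

```python
from pathlib import Path

# Assumption: the capture script writes files with one of these extensions.
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}


def expected_images(duration_s: float, interval_ms: int = 300) -> int:
    """Upper bound on images captured in duration_s seconds at one image per interval_ms."""
    if interval_ms <= 0:
        raise ValueError("interval_ms must be positive")
    return int(duration_s * 1000 // interval_ms)


def count_images(folder: str = "save_img") -> int:
    """Count image files directly inside folder; returns 0 if the folder does not exist."""
    path = Path(folder)
    if not path.is_dir():
        return 0
    return sum(1 for p in path.iterdir() if p.suffix.lower() in IMAGE_SUFFIXES)


if __name__ == "__main__":
    print("Expected in 60 s at 300 ms:", expected_images(60))
    print("Expected in 60 s at 1000 ms:", expected_images(60, 1000))
    print("Currently in save_img:", count_images())
```

Treat the expected count as an upper bound; actual capture and transfer time may make the real number lower.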

Change back the firmware

After you have finished recording images for the dataset, make sure to change the firmware inside the SenseCAP A1101 back to the original one, so that you can load object detection models again.

Enter boot mode on the SenseCAP A1101. Drag and drop this .uf2 file onto the VISIONAI drive according to your device. As soon as the .uf2 file finishes copying, the drive will disappear. This means the firmware has been successfully uploaded to the module.

Step Two: Generate Dataset with Roboflow

Roboflow is an online annotation tool. Click here to sign up for a Roboflow account and start a new project. Drag and drop the images that you have captured using the SenseCAP A1101, then annotate all the images and generate a dataset. In the end, you will be able to export the dataset as a .zip file or as code. (For more detailed instructions, see the A1101 wiki here.)

To continue to the next step, we need to generate a new version of the dataset and export the code.

Step Three: Train the Model

After we finish annotating and have the dataset code, we need to train a model on the dataset. Click here to open an already prepared Google Colab workspace, go through the steps mentioned in the workspace and run the code cells one by one.

Note: On Google Colab, in the code cell under step 4, you can directly paste the code snippet copied from Roboflow in the previous step.

It will walk through the following:

- Set up an environment for training

- Download a dataset

- Perform the training

- Download the trained model

(For detailed illustrations, jump to this part which explains how to train an AI model using YOLOv5 running on Google Colab.)

At the end of this step, you will be able to download a .uf2 model file, which is ready to deploy to your A1101.

Step Four: Deploy the trained model

Now we will move the model-1.uf2 file that we obtained at the end of the training onto the SenseCAP A1101.

Connect SenseCAP A1101 to your PC via a USB Type-C cable, double-click the boot button on SenseCAP A1101 to enter mass storage mode, and drag the model file to your A1101. As soon as the uf2 finishes copying into the drive, the drive will disappear. This means the uf2 has been successfully uploaded to the module.
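If you deploy models often, the drag-and-drop step can also be done from a script. This is just a sketch under assumptions: the function name `deploy_uf2` is made up, and the mount point of the VISIONAI drive (for example `/Volumes/VISIONAI` on macOS) depends on your operating system, so pass in whatever path your system shows.

```python
import shutil
from pathlib import Path


def deploy_uf2(model_file: str, drive: str) -> Path:
    """Copy a .uf2 file onto the mounted drive and return the destination path."""
    src = Path(model_file)
    dst_dir = Path(drive)
    if src.suffix.lower() != ".uf2":
        raise ValueError(f"{src.name} is not a .uf2 file")
    if not src.is_file():
        raise FileNotFoundError(f"model file not found: {src}")
    if not dst_dir.is_dir():
        # The drive only appears after double-clicking the boot button.
        raise FileNotFoundError(f"drive not mounted at {dst_dir}")
    return Path(shutil.copy(src, dst_dir))


if __name__ == "__main__":
    # Both paths are placeholders; adjust them to your own machine.
    print(deploy_uf2("model-1.uf2", "/Volumes/VISIONAI"))
```

Because the drive disappears as soon as the copy completes, some systems may report an error at the very end of the copy even though the upload worked. I have not verified this on every platform, so check the device in the app afterwards.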

Step Five: Verify the Model

- Open the SenseCAP Mate App. Under the Config screen, select Vision AI Sensor

- Press and hold the configuration button on the SenseCAP A1101 for 3 seconds to enter Bluetooth pairing mode

- Click Setup to start scanning for nearby SenseCAP A1101 devices, then click on the device SN that is found

- Go to Settings, choose "Object Detection" as the Algorithm, choose the corresponding "user define" AI model, and click "Send".

- Go to General and click Detect (Do not miss this!)

- Click here to open a preview window of the camera stream

- Click Connect button. Then you will see a pop-up on the browser. Select the device (SenseCAP A1101 / Grove AI) and click Connect

- Now we will be able to view the real-time inference results!

The rectangle shows the object that has been detected, along with two numbers. The first number identifies the class of the detected object based on our labels (e.g. 1 for rock, 2 for scissors, 3 for paper). The second number is the confidence score, in %, for each detected object.
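To make the two numbers easier to read, here is a small sketch that turns a class number and a confidence score into a readable line. The label mapping follows the example above, while the function name `describe` and the 50% threshold are my own assumptions, not something the preview page provides.

```python
# Example mapping from the text: 1 = rock, 2 = scissors, 3 = paper.
LABELS = {1: "rock", 2: "scissors", 3: "paper"}


def describe(class_id: int, confidence: int, min_confidence: int = 50) -> str:
    """Format one detection; min_confidence (in %) is an arbitrary illustrative cutoff."""
    name = LABELS.get(class_id, f"unknown({class_id})")
    flag = "" if confidence >= min_confidence else " (low confidence)"
    return f"{name}: {confidence}%{flag}"


if __name__ == "__main__":
    print(describe(1, 92))
    print(describe(3, 40))
```

A detection flagged as low confidence is a good candidate for the kind of image to add more of when retraining.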

Conclusion

As our dataset has a limited number of images, the model has difficulty differentiating "scissors" from "paper", although it can already detect "rock" perfectly. Even though the results from this model are not yet very satisfactory, I believe that with more image input and a larger dataset, it will become more accurate. I can't wait to retrain the model and test it again!

What interesting image recognition application would you like to build with the A1101? Feel free to share any thoughts with me!
